
Key Principles to Web-Scaling a Network

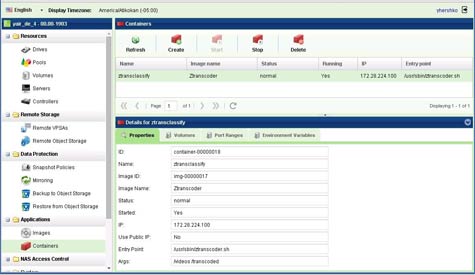

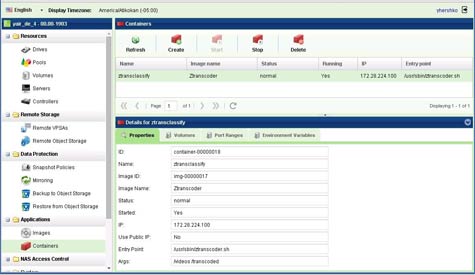

Moving to address the I/O contention that can result when hundreds of Docker containers compete for the same storage resources, Zadara Storage today announced a way to run Docker containers on top of its storage system rather than on a traditional server.

Zadara Storage COO Noam Shendar says running Docker containers directly on the company’s Virtual Private Storage Array not only dramatically improves the I/O performance of those applications, but also enables them to be easily backed up to a cloud service that supports the S3 application programming interface (API) developed by Amazon Web Services. The Zadara Container Service, says Shendar, is designed to provide access to an array that can be configured with multiple solid-state drives (SSDs) and hard drives, including 800GB SSDs and 6TB SATA disk drives.

Unlike traditional storage systems, the Zadara arrays are sold on an infrastructure-as-a-service model in which storage is paid for monthly based on how much data is actually stored. Zadara Storage delivers the array to the IT organization, but all the management services for that system are provided via a cloud service. That approach, says Shendar, means that IT organizations get all the business benefits of a cloud model while still having access to high-performance local storage resources. Zadara Storage, meanwhile, provides all patches to the storage system, replaces any components that fail, and includes a firewall.

It is unclear how many organizations will ultimately embrace an infrastructure-as-a-service (IaaS) model for storage. But at a time when many IT organizations will soon be asked to support Docker applications in production environments, the Zadara Storage approach offers an intriguing alternative. Most existing storage systems are not optimized for containers, and trying to provide I/O access to hundreds of Docker containers running on a physical server can be problematic.

As a result, many IT organizations may soon discover that now is as good a time as any to rethink not only how they pay for storage inside their data centers, but also what actually needs to run where.


Michael Vizard is a seasoned IT journalist with nearly 30 years of experience writing and editing about enterprise IT issues. He is a contributor to publications including ProgrammableWeb, IT Business Edge, CIO Insight and UBM Tech. He formerly was editorial director for Ziff-Davis Enterprise, where he launched the company’s custom content division, and has also served as editor in chief for CRN and InfoWorld. He has also held editorial positions at PC Week, Computerworld and Digital Review.