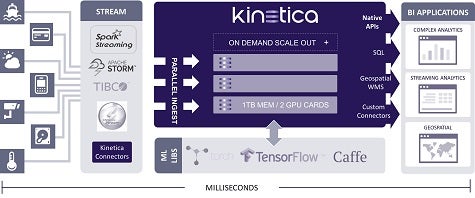

As a provider of an in-memory database that can be deployed on graphics processing units (GPUs) rather than x86 processors, Kinetica emerged last year as a new force to be reckoned with. This week, Kinetica is moving to make it simpler to invoke the parallel processing capabilities of GPUs by making available a range of third-party machine learning and artificial intelligence libraries along with its core database.

Eric Mizell, vice president of global solution engineering for Kinetica, says GPUs have quickly emerged as the preferred processors for running advanced machine learning and AI applications. By making available commonly used libraries such as TensorFlow, BIDMach, Caffe, and Torch, Mizell says, Kinetica is making it simpler for developers to build the next generation of applications running in memory.

“We’re trying to make it easier to ingest analytics data faster,” says Mizell.

Kinetica is essentially challenging two pillars of the IT industry at the same time. In partnership with NVIDIA, it is seriously challenging the assumption that industry-standard Intel processors would dominate the application landscape in perpetuity, as low-cost GPUs provide access to more processing power for certain classes of advanced applications. Meanwhile, both Oracle and SAP have made substantial bets on in-memory databases that run only on Intel processors, just as interest in GPUs inside and outside the cloud is on the rise.

Mizell notes that advanced applications that make use of machine learning algorithms and other forms of AI software need access to massive amounts of data. GPUs are becoming popular with developers of these applications, he says, because their parallel processing capabilities make it possible to support these applications in a denser IT infrastructure environment that generates less heat.

It’s still early days when it comes to handicapping which platform might ultimately gain the most traction for deploying advanced applications that employ machine learning algorithms and deep learning technologies. But early indicators are that the old IT guard that dominates data center environments today just might be in for the fight of its life.

Michael Vizard is a seasoned IT journalist with nearly 30 years of experience writing about and editing coverage of enterprise IT issues. He is a contributor to publications including ProgrammableWeb, IT Business Edge, CIOinsight and UBM Tech. He formerly was editorial director for Ziff-Davis Enterprise, where he launched the company’s custom content division, and has also served as editor in chief for CRN and InfoWorld. He has also held editorial positions at PC Week, Computerworld and Digital Review.