Enterprises today are looking to make every aspect of their data center infrastructure more software-defined – with the ultimate goal of achieving the software-defined data center (SDDC).

According to Andrew Hillier, CTO of Cirba, the SDDC is not achieved by simply bolting together virtualization, software-defined networking, and software-defined storage technologies. Rather, it is an operational state, achieved by adopting a new way of managing and controlling all the moving parts within the infrastructure.

Critical to this control is the ability to align the capabilities of the infrastructure (supply) with the requirements of the applications (demand). In this slideshow, Hillier offers four core elements for achieving this alignment and building the foundation for getting to the SDDC.

Building the Foundation for SDDC

The following are four core elements needed to build the foundation for a software-defined data center, as identified by Andrew Hillier, CTO of Cirba.

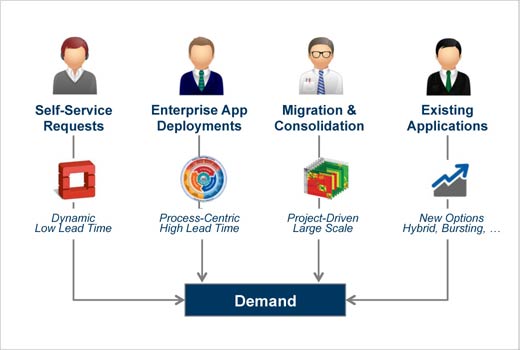

Demand Management

Much of the insight into the needs of applications already exists, but it has traditionally been used for procurement rather than for matching demand against existing infrastructure supply. That matching can be complex, because the destination environment must have not only enough resources but also the right kind of resources. Automating the analysis of both factors enables data-driven workload routing and capacity reservation, giving IT control over the pipeline of application demand.
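The two-part check described above (enough resources, and the right kind) can be sketched in a few lines. This is a minimal illustration, not Cirba's method: the `Environment` and `Workload` classes, the capability tags, and the "most spare CPU" tie-break are all assumptions made for the example.

```python
from dataclasses import dataclass, field


@dataclass
class Environment:
    """A hypothetical hosting environment (supply)."""
    name: str
    free_cpu: int                    # spare vCPUs
    free_mem_gb: int                 # spare memory in GB
    capabilities: set = field(default_factory=set)  # e.g. {"ssd", "pci"}


@dataclass
class Workload:
    """A hypothetical inbound application request (demand)."""
    name: str
    cpu: int
    mem_gb: int
    requires: set = field(default_factory=set)


def fits(workload, env):
    """True if env has enough resources AND the right kind of resources."""
    enough = env.free_cpu >= workload.cpu and env.free_mem_gb >= workload.mem_gb
    right_kind = workload.requires <= env.capabilities  # subset test
    return enough and right_kind


def route(workload, envs):
    """Pick a fitting environment and reserve capacity in it.

    Assumed tie-break: prefer the environment left with the most spare CPU.
    Returns the environment name, or None if nothing fits.
    """
    candidates = [e for e in envs if fits(workload, e)]
    if not candidates:
        return None
    best = max(candidates, key=lambda e: e.free_cpu - workload.cpu)
    best.free_cpu -= workload.cpu        # reserve the capacity
    best.free_mem_gb -= workload.mem_gb
    return best.name
```

A workload that requires the `"pci"` tag would be routed only to an environment that advertises it, even when another environment has far more spare capacity.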

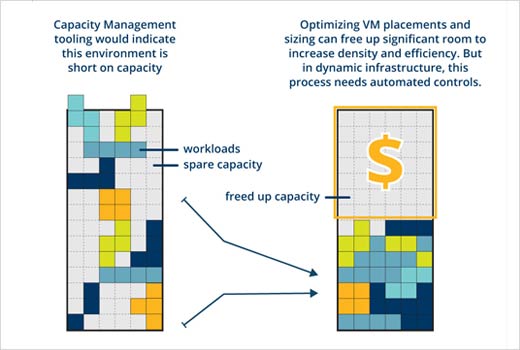

Automated Capacity Control

Traditional capacity management tooling is woefully inadequate in a world where the infrastructure is programmable and application demand is stacked on shared infrastructure. What is required is automated, policy-aware control over sizing, allocation, and placement decisions, which are the new control points in modern data centers.
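As one illustration of an automated sizing decision, the sketch below recommends a vCPU allocation from observed utilization. The function name, the nearest-rank 95th percentile, and the 70 percent target utilization are assumptions chosen for the example, not values taken from the slideshow.

```python
import math


def recommend_vcpus(allocated_vcpus, cpu_util_samples, target_util=0.70):
    """Suggest a vCPU count so that 95th-percentile usage sits at target_util.

    cpu_util_samples are fractions (0.0 to 1.0) of the currently allocated
    vCPUs. With no samples, the current allocation is returned unchanged.
    """
    if not cpu_util_samples:
        return allocated_vcpus
    ordered = sorted(cpu_util_samples)
    # Nearest-rank 95th percentile, computed with integer arithmetic.
    rank = max(1, (95 * len(ordered) + 99) // 100)
    p95 = ordered[rank - 1]
    used_vcpus = p95 * allocated_vcpus
    return max(1, math.ceil(used_vcpus / target_util))
```

Under these assumptions, a mostly idle 8-vCPU VM would be sized down, while a 4-vCPU VM running at 90 percent would be sized up.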

Policy-Based Operational Controls

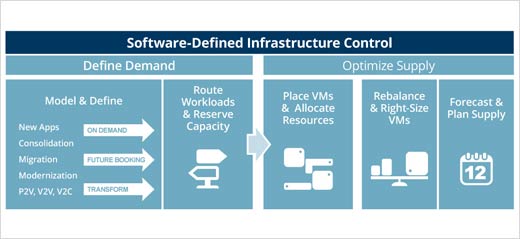

Becoming software-defined depends on the ability to codify the operational policies that govern how supply and demand are matched, aligned, and controlled. Using these policies programmatically to plan and operate environments enables the same infrastructure to behave in different ways depending on its intended purpose. This is the essence of software-defined operation.
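To make "codified policy" concrete, the sketch below applies two hypothetical policies to the same host and gets two different capacities. The `Policy` fields, the overcommit ratios, and the memory limits are illustrative assumptions, not figures from the slideshow.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Policy:
    """A hypothetical operational policy for one class of environment."""
    name: str
    cpu_overcommit: float   # vCPUs allowed per physical core
    max_mem_util: float     # fraction of host memory that may be allocated


# Assumed example policies: conservative for production, dense for dev/test.
PROD = Policy("production", cpu_overcommit=1.0, max_mem_util=0.75)
DEV = Policy("dev-test", cpu_overcommit=4.0, max_mem_util=0.90)


def vm_capacity(cores, mem_gb, vm_vcpus, vm_mem_gb, policy):
    """How many VMs of a given size the host may hold under a policy."""
    by_cpu = int(cores * policy.cpu_overcommit) // vm_vcpus
    by_mem = int(mem_gb * policy.max_mem_util) // vm_mem_gb
    return min(by_cpu, by_mem)  # the tighter constraint wins
```

For a 32-core, 256 GB host and 4-vCPU, 16 GB VMs, this sketch allows 8 VMs under `PROD` and 14 under `DEV`: the hardware is identical, and only the policy differs.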

Automation

Most organizations achieve success in automating isolated parts of the operational process, such as the act of provisioning a new VM, but still require smart people with spreadsheets for higher-level processes. Automation at this level requires accurate, detailed models of existing and inbound demands, fine-grained control over supply, and policies that bring them together. The move toward software-defined is invariably coupled to the move to higher levels of automation, and a new type of policy-based control system is required to get there.

Conclusion

Software-defined infrastructure brings a new level of complexity that is controllable only through sophisticated analytics and purpose-built control software. The foundation of the next generation of IT infrastructure control lies in the ability to make accurate, automated decisions that span compute, storage, network, and software resources, based on the true demands of the applications. A new breed of policy-based management that fulfills this requirement is called software-defined infrastructure control.