If EMC has its way, the line between primary storage on premises and secondary and tertiary storage in the cloud is going to get a whole lot blurrier. This week, EMC unveiled a raft of updates to its storage portfolio that essentially turn EMC VMAX and VNX storage systems into hubs through which IT organizations can tier data across local systems and external cloud services.

Chris Ratcliffe, senior vice president of marketing for EMC Core Technologies, says that as storage management gets more sophisticated, IT organizations are asking vendors for ways to break down the walls that currently separate their various storage systems from the cloud services they increasingly rely on to back up and archive data at much lower cost than storing it on premises.

With that goal in mind, EMC has enhanced its FAST.X tiering software and EMC VPLEX cloud-tiering software to add support for the EMC CloudArray software that’s used to connect to external clouds and third-party storage systems running inside or outside of the same data center.

Public cloud services supported by EMC include VMware vCloud Air, Microsoft Azure, Amazon S3 and Google Cloud Platform.

EMC is releasing new solutions across its entire storage and data protection portfolio. These include:

- A faster version of the CloudBoost software that it uses to extend its Data Domain backup and recovery storage systems to the cloud.

- An enhanced version of Spanning that extends restore and security capabilities and that includes a new European data destination option that helps organizations comply with European data sovereignty laws and regulations.

- An update of the Data Domain operating system with enhanced capacity management, secure multi-tenancy and a denser configuration that EMC says dramatically reduces total cost of ownership.

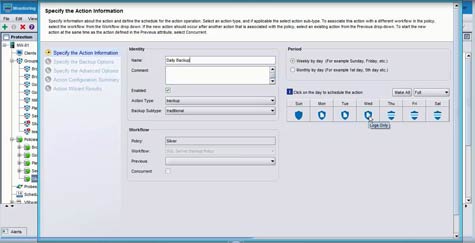

- A new version of its NetWorker 9 data protection software that adds a universal policy engine for automating the data protection process regardless of where the data resides.

Those announcements come on the heels of EMC’s launch last week of updates to its scale-out network-attached storage (NAS) system, which also added integration with public cloud services.

Ultimately, Ratcliffe says, it’s becoming clear that IT organizations are less interested in managing individual storage boxes than in understanding, at a high level, what storage resources are available. Armed with that information, organizations can then assign a policy to a particular set of data, and that policy results in the data being automatically stored in the optimal location.
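To make the idea of policy-driven placement concrete, here is a minimal sketch in Python. It is not EMC's implementation or any EMC API: the tier names, access times, costs and the `place` function are all hypothetical, chosen only to illustrate how a policy expressed as a maximum acceptable access time could be resolved to the cheapest tier that satisfies it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tier:
    name: str              # hypothetical tier label
    access_seconds: float  # illustrative time to reach the data
    cost_per_gb: float     # illustrative monthly cost, arbitrary units


@dataclass(frozen=True)
class Policy:
    max_access_seconds: float  # slowest access the data owner will accept


# Made-up tiers and numbers, for illustration only.
TIERS = [
    Tier("local-flash", 0.001, 1.00),
    Tier("local-disk", 0.01, 0.30),
    Tier("public-cloud-object", 0.5, 0.03),
    Tier("cloud-archive", 3600.0, 0.01),
]


def place(policy, tiers=TIERS):
    """Return the cheapest tier whose access time satisfies the policy."""
    eligible = [t for t in tiers if t.access_seconds <= policy.max_access_seconds]
    if not eligible:
        raise ValueError("no tier satisfies the policy")
    return min(eligible, key=lambda t: t.cost_per_gb)
```

Under these assumptions, a data set that must be reachable within one second would land on the hypothetical cloud object tier rather than on local disk, because that tier is the cheapest one that still meets the access-time requirement.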

It might take a while for most IT organizations to reach that level of data management maturity across a hybrid cloud computing environment. But it’s become apparent that as storage becomes more software-defined, the physical location of a particular set of data may not be as important as simply knowing how long it will take to access it.