Figuring out exactly how much storage capacity an application would need over the long haul has historically been more art than science. Given the high margin for error, many organizations routinely overprovisioned storage. After all, it’s generally less of a sin to spend too much on storage than it is to see application performance suddenly drop for one unexplained reason or another.

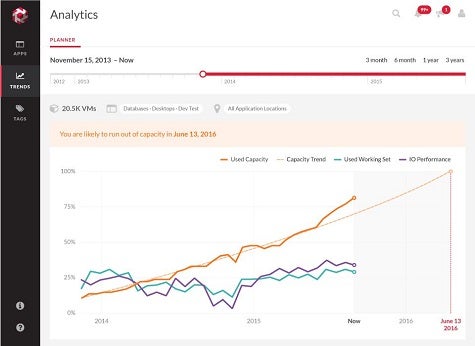

As of this week, however, Tintri says it is looking to take the guesswork out of storage capacity management on its arrays via the preview of a predictive analytics application the company will make available next year.

Tintri this week also expanded the number of virtual machine platforms it integrates with by adding support for Citrix XenServer, and added an entry-level, all-flash array to its portfolio of storage arrays.

Chuck Dubuque, senior director of product marketing at Tintri, says that because Tintri is tightly integrated with all the virtual machine platforms it supports, the visibility that Tintri Analytics will provide into the overall environment will be much greater than that of any standalone predictive analytics application. Tintri already supports VMware vSphere, Microsoft Hyper-V, Red Hat Enterprise Virtualization and OpenStack, and its arrays are designed to support all of them concurrently.

That’s significant, says Dubuque, because it’s clear that there are now more heterogeneous virtual machine environments than ever trying to access multiple classes of persistent storage mechanisms.

In fact, with the latest release of the Tintri operating system, Tintri is also adding support for VMware vSphere Virtual Volumes (VVols), along with SyncVM file-level restore, WMI Path Encryption, snapshot enhancements, and PowerShell cmdlets in Microsoft Hyper-V environments.

As storage continues to evolve, it’s clear that the days when storage administrators spent their time manually optimizing the storage of data are coming to a close. Now we’ll see increased reliance on analytics and automation to handle those tasks. That doesn’t necessarily mean there won’t be a need for storage administrators going forward. But it does mean that the amount of data each storage administrator is expected to manage will increase, and so will the accuracy with which they can determine how much storage any application needs, regardless of the platform it happens to be running on at any given time.