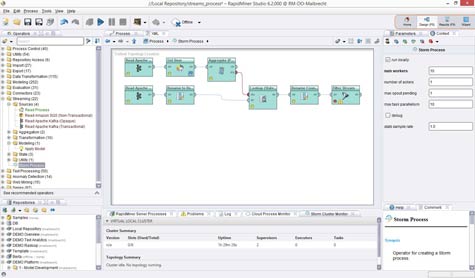

In terms of Big Data, the open source Apache Storm project has been picking up a lot of momentum because it provides a way to analyze massive amount of streaming data in memory. Now RapidMiner is making it possible for its RapidMiner Streams predictive analytics application to make sense of all that Apache Storm data.

In addition, RapidMiner has announced that it now provides connectors to Qlik business intelligence software, Apache Solr search engines, and Mozenda screen scraping software. Its RapidMiner Server platform also now comes with applications that can be invoked by analysts without requiring any additional coding.

RapidMiner CEO Ingo Mierswa says that with more data moving into Apache Storm clusters, it makes sense to shift the locus of where predictive analytics is taking place to where that data is being captured in real time.

Often associated with Hadoop, the Apache Storm project pulls data from Hadoop or any other source into a distributed framework for processing massive streams of data, which is highly flexible in terms of when and where data actually gets processed. At the core of the system is a master node dubbed Nimbus, which keeps track of what jobs have been partitioned across a distributed Apache Storm cluster.

Because Apache Storm is better suited to processing data in real time than a Hadoop architecture that was originally designed for batch processing of large jobs, Mierswa says the laws of data gravity are starting to pull predictive analytics in the direction of Apache Storm.

As investments in all types of analytics continue to increase and many organizations are pondering the need to appoint a chief analytics officer, it’s becoming apparent that how data is going to be captured and processed cost-effectively has become of paramount importance to the IT organization. The challenge, of course, is finding a way to then process all the data in a manner that turns it into valuable information that the business can actually act on in a timely manner.