Eight Ways to Put Hadoop to Work in Any IT Department

Replicating data across a wide area network (WAN) is generally considered too expensive and time consuming to be taken lightly by most IT organizations. The rise of Big Data naturally exacerbates that challenge.

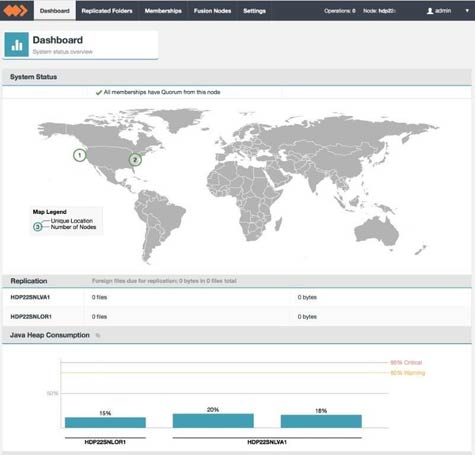

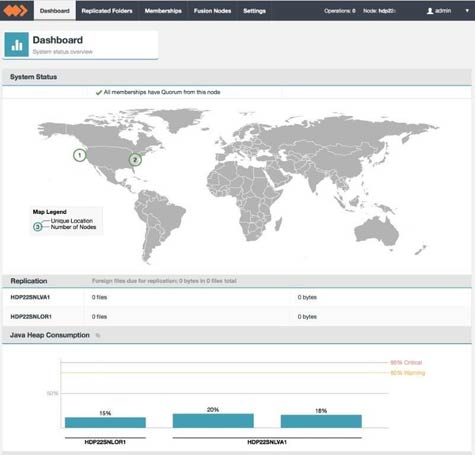

For that reason, WANdisco created replication software for Hadoop environments that keeps all the servers and clusters deployed across multiple data centers fully readable and writeable, always in sync, and able to recover automatically from one another. Now WANdisco is extending the capabilities of the core WANdisco Fusion Platform via six plug-in modules that address everything from disaster recovery to replicating Hadoop data into the cloud.

Jim Campigli, chief product officer for WANdisco, says that as Hadoop deployments become more distributed, IT organizations are going to need to actively manage multiple deployments of Hadoop clusters. To address that issue, Campigli says many of them will need to find a way to cost-effectively keep Hadoop clusters synchronized with one another across a WAN.

To facilitate that process, Campigli says, WANdisco decided to create plug-in modules to address specific types of Hadoop scenarios, such as replicating Hive and HBase data. In addition, WANdisco is now making available a Fusion software development kit (SDK) that enables IT organizations to create their own plug-in modules for the WANdisco Fusion Platform.

The WANdisco Fusion Platform itself is based on a Distributed Coordination Engine (DCE), a commercial implementation of the Paxos consensus algorithm that creates a peer-to-peer architecture for replicating data with no single point of failure.
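For readers unfamiliar with Paxos, the following is a minimal, in-memory sketch of the textbook single-decree version of the algorithm. It is not WANdisco's DCE, and every name in it (`Acceptor`, `propose`, the `"write-A"` values) is hypothetical. It only illustrates the general idea that any peer can propose a change and that a change is chosen once a majority of peers accept it, so no single node is a point of failure.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Acceptor:
    """One peer's acceptor state in single-decree Paxos (illustrative only)."""
    promised: int = -1                     # highest proposal number promised so far
    accepted_n: int = -1                   # number of the proposal accepted, if any
    accepted_value: Optional[str] = None   # value of the proposal accepted, if any

    def prepare(self, n: int) -> Tuple[bool, int, Optional[str]]:
        # Phase 1b: promise to ignore proposals numbered below n.
        if n > self.promised:
            self.promised = n
            return True, self.accepted_n, self.accepted_value
        return False, -1, None

    def accept(self, n: int, value: str) -> bool:
        # Phase 2b: accept unless a higher-numbered promise has been made.
        if n >= self.promised:
            self.promised = n
            self.accepted_n = n
            self.accepted_value = value
            return True
        return False


def propose(acceptors: List[Acceptor], n: int, value: str) -> Optional[str]:
    """Run one proposal round; return the chosen value, or None without a majority."""
    majority = len(acceptors) // 2 + 1

    # Phase 1a: ask every peer for a promise.
    replies = [a.prepare(n) for a in acceptors]
    granted = [(an, av) for ok, an, av in replies if ok]
    if len(granted) < majority:
        return None

    # If any peer already accepted a value, adopt the highest-numbered one.
    _, prior_value = max(granted, key=lambda p: p[0])
    if prior_value is not None:
        value = prior_value

    # Phase 2a: ask peers to accept the (possibly adopted) value.
    votes = sum(a.accept(n, value) for a in acceptors)
    return value if votes >= majority else None


# Hypothetical usage: three peers, two competing proposals.
peers = [Acceptor() for _ in range(3)]
first = propose(peers, 1, "write-A")    # chosen: "write-A"
second = propose(peers, 2, "write-B")   # still "write-A": the chosen value is kept
```

The second proposal carries a different value but returns the first one, which is the property that keeps peers in agreement regardless of which node initiated a change. A production system would add networking, persistence, retries and a sequence of such decisions, none of which are shown here.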

Regardless of how Hadoop is being used, one thing is clear: not only is Hadoop being used more commonly in production environments, but more groups within an organization are also deploying applications on top of it. As such, it’s only a matter of time before most organizations will need to share data between different Hadoop clusters.

The networking issue that most organizations face today is that, while the amount of data they need to manage has increased several times over, the amount of available bandwidth on the WAN has not materially increased for most of them. Given the cost of acquiring additional bandwidth, it’s already apparent that new approaches to replicating massive amounts of data across the WAN will be required.

Michael Vizard is a seasoned IT journalist, with nearly 30 years of experience writing and editing about enterprise IT issues. He is a contributor to publications including Programmableweb, IT Business Edge, CIOinsight and UBM Tech. He formerly was editorial director for Ziff-Davis Enterprise, where he launched the company’s custom content division, and has also served as editor in chief for CRN and InfoWorld. He also has held editorial positions at PC Week, Computerworld and Digital Review.