Moving massive amounts of data around the enterprise in support of Big Data projects is one of the most painstaking tasks an IT organization can be asked to manage. Each step takes a fair amount of time because, by and large, IT staffs are working with lower-level protocols.

Advanced Systems Concepts, Inc. (ASCI) this week moved to automate that data movement with the release of an update to its ActiveBatch workflow software.

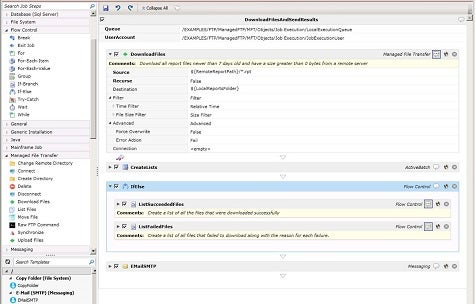

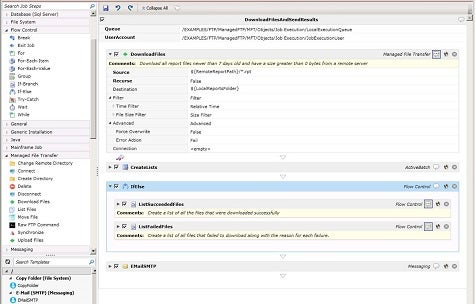

Mehul Amin, director of engineering for ASCI, says version 11 of ActiveBatch applies an event-driven automation framework to moving data in and out of various components of a Hadoop cluster, including HDFS, Hive, MapReduce, Spark, HBase and Pig. As part of the process, IT organizations can employ reusable templates and schedule data transfers in advance.

“It’s about automating the workflow involving Big Data,” says Amin.

Other new features in ActiveBatch 11 include support for managed file transfers and for FTP Triggers, which automate the transfer of files. Just as importantly, the release includes tools for rolling back those file transfers.

One of the dirty little secrets about Big Data is that most organizations find themselves spending more time on plumbing issues than they do analyzing all the data they collect. Automating the workflow around these projects should go a long way toward reducing the mean time to value, that is, the time between when a Big Data project is launched and when actionable intelligence is delivered.

Michael Vizard is a seasoned IT journalist, with nearly 30 years of experience writing and editing about enterprise IT issues. He is a contributor to publications including ProgrammableWeb, IT Business Edge, CIO Insight and UBM Tech. He formerly was editorial director for Ziff-Davis Enterprise, where he launched the company's custom content division, and has also served as editor in chief for CRN and InfoWorld. He has also held editorial positions at PC Week, Computerworld and Digital Review.